Quality Assurance: Future-Proof Your Software Development

Analyze. Automate. Accelerate. Assure Quality.

No matter your development approach, we have comprehensive quality management tools for your entire software lifecycle.

If you have any questions, we are here to help.

Sophisticated Quality Assurance Tools That Exceed Your Expectations

Cover every phase of the product development process and simplify work across design, development, quality assurance, and go-to-market. Our tools set you up for continuous improvement and maintainability.

Test Center

CONTROL. Centralized test result management platform connecting automation with the entire dev process.

Axivion Static Code Analysis

ACCURACY. Next-generation static code analysis that checks your software for style violations.

Axivion Architecture Verification

STRUCTURE. Automated architecture check that guarantees necessary conformance.

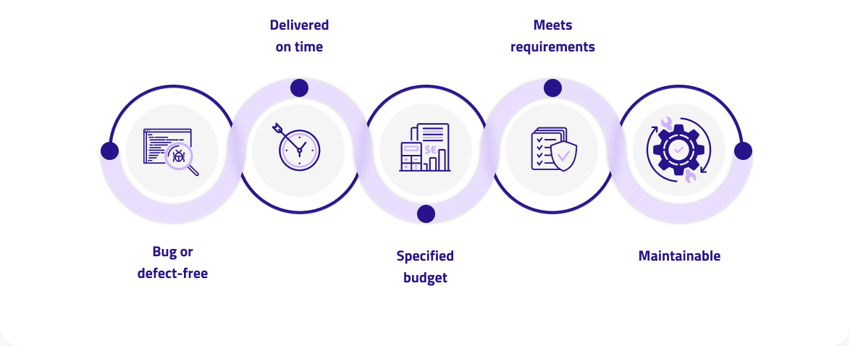

We Cover Each Pillar of Software Quality Management

Our comprehensive quality assurance product portfolio enables you to automate your testing, accelerate your software lifecycle, and most importantly, ensure quality. Combine those elements and you save time, reduce cost, and enable growth.

What's New in Quality Assurance Management

Always taking you to the next level. Check out our latest updates and releases from our entire product offering.

Squish 7.2

The latest Squish version adds support for Qt for WebAssembly, improves screenshot verification, simplifies debugging with interactive insights into application contexts, and offers much more.

Coco 7.0.0

Our latest Coco update brings you the highly anticipated test data generation feature. Say hello to Coco Test Engine!

Test Center 3.3

Test Center 3.3 is finally here and the team is thrilled to share with you the three major improvements that come with this release.

Axivion 7.7

Our latest release covers 100% of the MISRA C:2023 rules and the majority of the MISRA C++:2023 rules, and offers enhanced Qt-specific security rules and more.

Success Stories

Learn more about how our customers have benefitted from integrating our products into their software development process. For a full list of success stories from various industries, please visit our QA Resource Center.

ABB

Assured with Squish

“I can program in Python and even import my own libraries in the tests. That’s where it’s handy.”

Jarkko Peltonen

Test Automation Specialist at ABB

Schaeffler

Assured with Axivion

“The complexity of automotive embedded software is further increased by software components with different ASIL requirements. With the ISO 26262 certified Axivion Suite, Schaeffler Automotive Buehl maintains the high quality of its mixed ASIL systems. Automated architecture verification reduces manual testing work and therefore creates free capacities for new developments in electromobility.”

Schaeffler Automotive Buehl GmbH & Co. KG

Skyguide

Assured with Squish

“What’s really key for us, having to do end-to-end integration testing and not normally having access to all the source code, is a tool like Squish that can talk to an application on Linux and one on Windows…it provides exactly what we need.”

Skyguide

Apex.AI

Assured with Axivion

“We have evaluated several static analysis tools, and Axivion Suite clearly stood out in our tests. The tool performed best in terms of AUTOSAR C++14 coverage and convinced us through its ease of use, control flow, and data flow analysis, and report generation. Axivion Suite has already become a mainstay component in our development workflow and a valuable component of our DevOps pipeline.”

Dejan Pangercic

CTO and Co-Founder of Apex.AI

Siemens Healthineers

Assured with Axivion

“Thanks to the support during implementation and the excellent support provided by the Professional Services Team, it proved possible to integrate the Axivion Suite into our development environment quickly and easily. There are virtually no architecture violations now; instead, we have a higher standard of architecture-compliant code – across all our development teams, worldwide.”

Sven Neuberg

Software Developer Computed Tomography at Siemens Healthcare GmbH

Visit our QA Resource Center

Find success stories, webinars, and documents for Squish, Coco, Test Center, and Axivion.

Qt Group includes The Qt Company Oy and its global subsidiaries and affiliates.